I am a staff research scientist at NIO USA, solving dexterous robot manipulation.

My research interests lie in learning and search, computational design, and large-scale system evaluation for embodied intelligence.

Previously, I was an early researcher at Skild AI, a unicorn startup co-founded by Prof. Deepak Pathak and Prof. Abhinav Gupta from Carnegie Mellon University (CMU). I obtained my Ph.D. in Computer Science from the University of Southern California (USC), advised by Prof. Stefanos Nikolaidis. During my Ph.D., I was grateful to collaborate with Prof. Daniel Seita, Prof. Yue Wang, Prof. Joseph J. Lim, and Prof. Sven Koenig. Before starting my Ph.D., I received my Master's degree from USC, advised by Prof. Gaurav Sukhatme and Prof. Stefan Schaal. I received my bachelor's degree in Bioengineering from Zhejiang University (ZJU), where I began studying biology and AI.

* Research collaboration: contact me if you are interested in collaborating on enabling human-like dexterous robot manipulation!

News

- (Dec 2025) I joined NIO USA as a staff research scientist, solving dexterous robot manipulation!

- (Sep 2025) "Learning to Design and Control Hands with Human-Level Dexterity" submitted to ICRA 2026!

- (Aug 2025) "ManipBench: Benchmarking Vision-Language Models for Low-Level Robot Manipulation" accepted at CoRL 2025. Stay tuned for our official benchmark release!

- (Jul 2025) Received a $2,000 grant from Lambda's research grant program. Thanks to Lambda for supporting our research on dexterous robot manipulation!

- (September 2024) "GPT-Fabric: Folding and Smoothing Fabric by Leveraging Pre-Trained Foundation Models" accepted at ISRR 2024.

- (July 2024) Received a $5,000 grant from the OpenAI Researcher Access Program. Thanks to OpenAI for supporting our research on embodied foundation models!

- (May 2024) "A concept for integrating robotic systems in an underground roof support machine" accepted at the Journal of Industrial Safety.

- (April 2024) I successfully defended my PhD thesis "Planning and Learning for Long-horizon Collaborative Manipulation Tasks"!

- (August 2023) "Surrogate Assisted Generation of Human-Robot Interaction Scenarios" accepted at CoRL 2023.

- (August 2023) "Multi-Robot Geometric Task-and-Motion Planning for Collaborative Manipulation Tasks" accepted at AURO.

- (April 2023) "PATO: Policy Assisted TeleOperation for Scalable Robot Data Collection" accepted at RSS 2023.

Research

My current research goal is to enable robots to intelligently perform long-horizon, complex manipulation tasks (e.g., deformable-object, collaborative, and dexterous manipulation) in unstructured human environments (e.g., kitchens and underground mines).

To achieve my research goal, I am currently interested in developing fundamental learning, search, design, and evaluation algorithms for robotics.

Selected Publications

ManipBench: Benchmarking Vision-Language Models for Low-Level Robot Manipulation
Enyu Zhao*, Vedant Raval*, Hejia Zhang*, Jiageng Mao, Zeyu Shangguan, Stefanos Nikolaidis, Yue Wang, Daniel Seita
Conference on Robot Learning (CoRL), 2025
[PDF] [Project]
We propose ManipBench, a benchmark for evaluating the low-level manipulation reasoning capabilities of vision-language models (VLMs). Rather than evaluating foundation models by executing full manipulation trajectories, we introduce a multiple-choice question framework that enables efficient and systematic evaluation.

Multi-Robot Geometric Task-and-Motion Planning for Collaborative Manipulation Tasks
Hejia Zhang, Shao-Hung Chan, Jie Zhong, Jiaoyang Li, Peter Kolapo, Sven Koenig, Zach Agioutantis, Steven Schafrik, Stefanos Nikolaidis
Autonomous Robots (AURO), 2023
The design of the autonomous roof bolting system: Technical Report for Alpha Foundation for the Improvement of Mine Safety and Health [PDF]
This work extends our previous work on multi-robot geometric task-and-motion planning (MR-GTAMP). We add an application study on the roof-bolting task, an essential operation in the underground mining cycle, run additional scalability experiments, and substantially expand the description of the MR-GTAMP framework.

PATO: Policy Assisted TeleOperation for Scalable Robot Data Collection
Shivin Dass*, Karl Pertsch*, Hejia Zhang, Youngwoon Lee, Joseph J. Lim, Stefanos Nikolaidis
Robotics: Science and Systems (RSS), 2023
Also at Pre-training for Robot Learning @ CoRL 2022.
[Code] [PDF] [Project]
We enable scalable robot data collection by assisting human teleoperators with a learned policy. Our approach estimates its uncertainty over future actions to determine when to request user input. In real-world user studies, we demonstrate that our system enables more efficient teleoperation with reduced mental load and up to four robots in parallel.

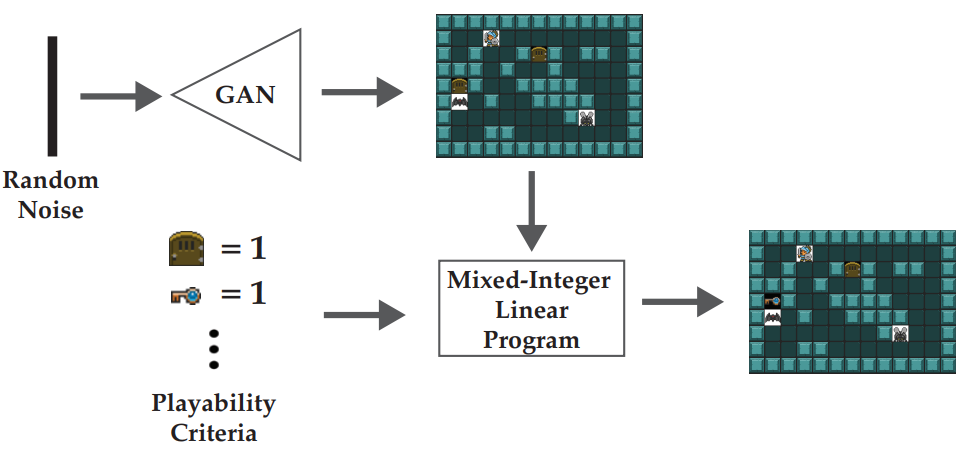

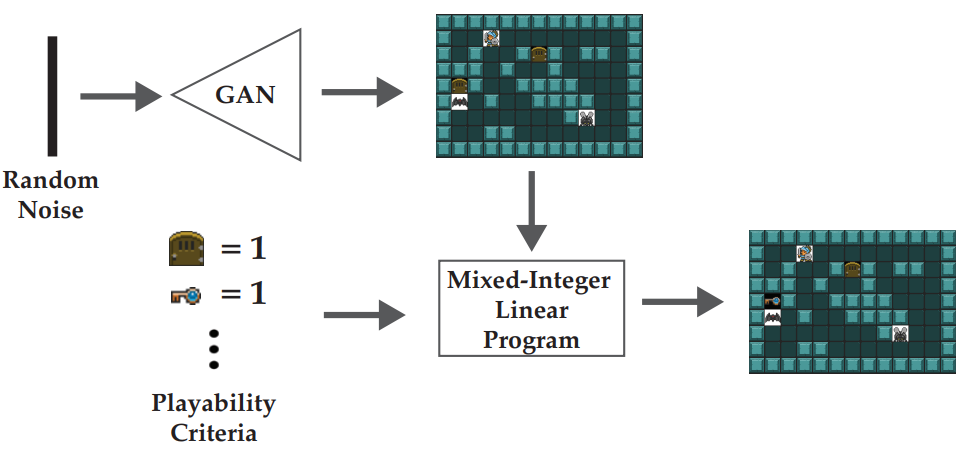

Hejia Zhang*, Matthew Fontaine*, Amy Hoover, Julian Togelius, Bistra Dilkina, Stefanos Nikolaidis
AAAI Conference on Artificial Intelligence and Interactive Digital Entertainment (AIIDE), 2020 (Oral Presentation; 25% acceptance rate)
[BibTeX] [Code] [PDF]
We propose a “generate-then-repair” framework for automatic generation of playable levels adhering to specific styles.

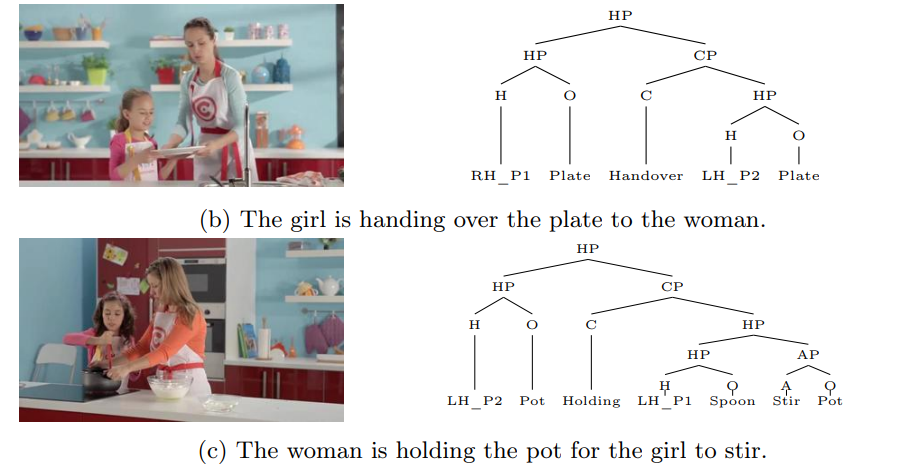

Hejia Zhang, Jie Zhong, Stefanos Nikolaidis
Emergent Behaviors in Human-Robot Systems @ RSS, 2020
Featured as Paper of the Month by Kinova Robotics.
[PDF] [Slides] [Talk]
Previous work has shown that the space of human manipulation actions has a linguistic, hierarchical structure that relates actions to manipulated objects and tools. Building upon this theory of language for action, we propose a system for understanding and executing demonstrated action sequences from full-length, real-world cooking videos on the web.

Learning and Search for Robot Manipulation

"Search and learning are the two most important classes of techniques for utilizing massive amounts of computation in AI research." (Richard Sutton, The Bitter Lesson.)

Hejia Zhang, Stefanos Nikolaidis
Under Review
[Coming Soon!]
We propose a framework for guiding planners to relocate objects to feasible and effective placements such that the robot can efficiently move objects of interest to their goal poses specified by humans.

GPT-Fabric: Folding and Smoothing Fabric by Leveraging Pre-Trained Foundation Models
Vedant Raval*, Enyu Zhao*, Hejia Zhang, Stefanos Nikolaidis, Daniel Seita
International Symposium on Robotics Research (ISRR), 2024
[PDF] [Project]
We utilize pre-trained large language models to generate robot actions for fabric smoothing and folding tasks.

Multi-Robot Geometric Task-and-Motion Planning for Collaborative Manipulation Tasks
Hejia Zhang, Shao-Hung Chan, Jie Zhong, Jiaoyang Li, Peter Kolapo, Sven Koenig, Zach Agioutantis, Steven Schafrik, Stefanos Nikolaidis
Autonomous Robots (AURO), 2023
The design of the autonomous roof bolting system: Technical Report for Alpha Foundation for the Improvement of Mine Safety and Health [PDF]
This work extends our previous work on multi-robot geometric task-and-motion planning (MR-GTAMP). We add an application study on the roof-bolting task, an essential operation in the underground mining cycle, run additional scalability experiments, and substantially expand the description of the MR-GTAMP framework.

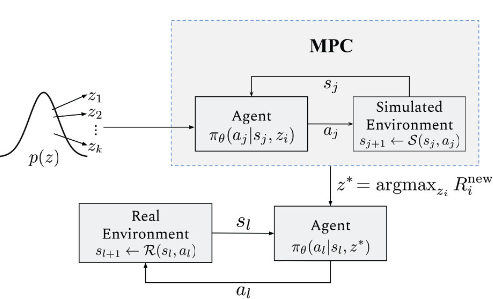

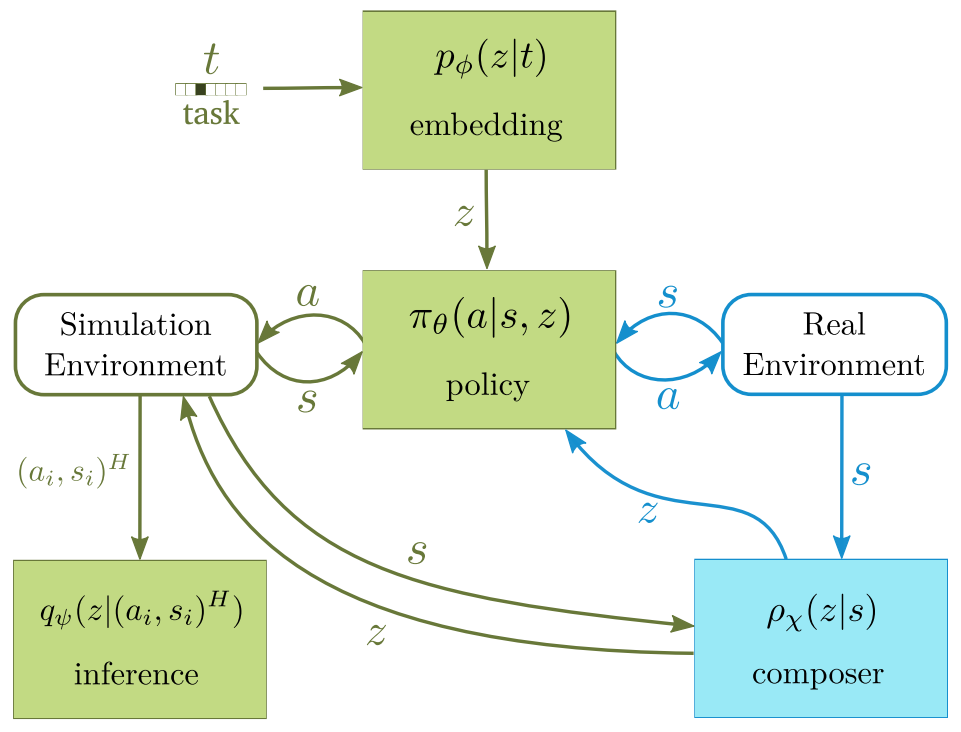

PATO: Policy Assisted TeleOperation for Scalable Robot Data Collection
Shivin Dass*, Karl Pertsch*, Hejia Zhang, Youngwoon Lee, Joseph J. Lim, Stefanos Nikolaidis
Robotics: Science and Systems (RSS), 2023
Also at Pre-training for Robot Learning @ CoRL 2022.
[Code] [PDF] [Project]
We enable scalable robot data collection by assisting human teleoperators with a learned policy. Our approach estimates its uncertainty over future actions to determine when to request user input. In real-world user studies, we demonstrate that our system enables more efficient teleoperation with reduced mental load and up to four robots in parallel.

Hejia Zhang, Shao-Hung Chan, Jie Zhong, Jiaoyang Li, Sven Koenig, Stefanos Nikolaidis
IEEE International Conference on Automation Science and Engineering (CASE), 2022
Also at SCR 2022.
[PDF] [Slides]
We address multi-robot geometric task-and-motion planning (MR-GTAMP) problems in synchronous, monotone setups. The key insight is that pre-computed manipulation capabilities of individual robots can be used to guide multi-robot planning.

Hejia Zhang, Jie Zhong, Stefanos Nikolaidis
Emergent Behaviors in Human-Robot Systems @ RSS, 2020
Featured as Paper of the Month by Kinova Robotics.
[PDF] [Slides] [Talk]
Previous work has shown that the space of human manipulation actions has a linguistic, hierarchical structure that relates actions to manipulated objects and tools. Building upon this theory of language for action, we propose a system for understanding and executing demonstrated action sequences from full-length, real-world cooking videos on the web.

Hejia Zhang, Po-Jen Lai, Sayan Paul, Suraj Kothawade, Stefanos Nikolaidis
International Symposium on Robotics Research (ISRR), 2019
[BibTeX] [PDF]
We present a system for knowledge acquisition of collaborative manipulation action plans that outputs commands to the robot in the form of a visual sentence.

Ryan Julian, Eric Heiden, Zhanpeng He, Hejia Zhang, Stefan Schaal, Joseph J. Lim, Gaurav S. Sukhatme, Karol Hausman
International Journal of Robotics Research (IJRR), 2020
Also at Deep RL Workshop @ NeurIPS 2018.
[PDF]
This is an extended version of our previous work on sim-to-real transfer. We present an algorithm that allows our sim-to-real method to perform long-horizon tasks never seen in simulation by intelligently sequencing short-horizon latent skills.

Hejia Zhang, Eric Heiden, Stefanos Nikolaidis, Joseph J. Lim, Gaurav S. Sukhatme
Southern California Robotics Symposium (SCR), 2019
[PDF] [Poster] [Slides] [Video]
In this paper, we introduce a state transition model (STM) that generates joint-space trajectories by imitating motions from expert behavior. Given a few demonstrations, we show in real robot experiments that the learned STM can quickly generalize to unseen tasks and synthesize motions having longer time horizons than the expert trajectories.

Ryan Julian*, Eric Heiden*, Zhanpeng He, Hejia Zhang, Stefan Schaal, Joseph J. Lim, Gaurav S. Sukhatme, Karol Hausman
International Symposium on Experimental Robotics (ISER), 2018
[BibTeX] [PDF]
We present a novel solution to the problem of simulation-to-real transfer, which builds on recent advances in robot skill decomposition.

Computational Robot Hardware Design

Eric Heiden*, David Millard*, Hejia Zhang, Gaurav S. Sukhatme
Technical Report
[PDF]
We propose a differentiable physics engine that allows for efficient, accurate inference of physical properties of rigid-body systems. We present experiments showing computational task-based robot design.

Large-scale Robot System Evaluation

ManipBench: Benchmarking Vision-Language Models for Low-Level Robot Manipulation
Enyu Zhao*, Vedant Raval*, Hejia Zhang*, Jiageng Mao, Zeyu Shangguan, Stefanos Nikolaidis, Yue Wang, Daniel Seita
Conference on Robot Learning (CoRL), 2025
[PDF] [Project]
We propose ManipBench, a benchmark for evaluating the low-level manipulation reasoning capabilities of vision-language models (VLMs). Rather than evaluating foundation models by executing full manipulation trajectories, we introduce a multiple-choice question framework that enables efficient and systematic evaluation.

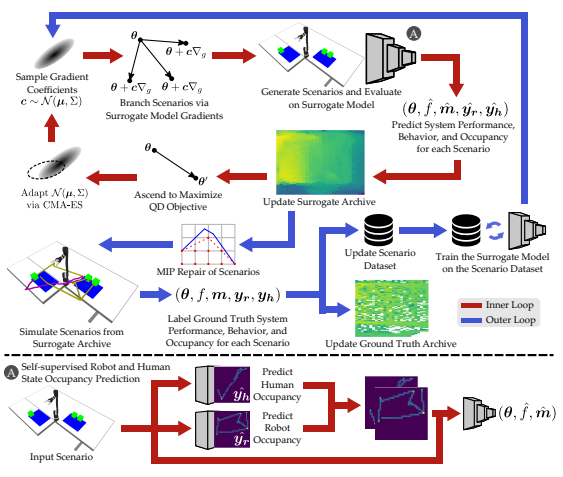

Surrogate Assisted Generation of Human-Robot Interaction Scenarios
Varun Bhatt, Heramb Nemlekar, Matthew C. Fontaine, Bryon Tjanaka, Hejia Zhang, Ya-Chuan Hsu, Stefanos Nikolaidis
Conference on Robot Learning (CoRL), 2023 (Oral Presentation; 6.6% acceptance rate)
[PDF]
We propose augmenting scenario generation systems with surrogate models that predict agent behaviors. We show that surrogate-assisted scenario generation efficiently synthesizes diverse datasets of challenging scenarios.

Hejia Zhang*, Matthew Fontaine*, Amy Hoover, Julian Togelius, Bistra Dilkina, Stefanos Nikolaidis
AAAI Conference on Artificial Intelligence and Interactive Digital Entertainment (AIIDE), 2020 (Oral Presentation; 25% acceptance rate)
[BibTeX] [Code] [PDF]
We propose a “generate-then-repair” framework for automatic generation of playable levels adhering to specific styles.

Demos

For more demo videos, please visit my YouTube Channel.